Doomsday AI Memo: The AI Warning That Sparked Global Fear and Debate in 2026

Artificial Intelligence is transforming the world faster than any technology in history. But alongside innovation, a powerful phrase has emerged: “Doomsday AI Memo.”

This term refers to public warnings issued by leading AI experts who believe advanced artificial intelligence could pose an existential threat to humanity.

At the same time, AI is also creating massive opportunities in marketing, automation, and online income — as explained in our guide on

👉 Best AI Tools for Marketing in 2026.

So is AI a danger — or the greatest opportunity of our generation?

Let’s break it down.

What Is the Doomsday AI Memo?

The phrase became widely known after a one-sentence public statement released by the Center for AI Safety in May 2023.

The statement declared:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

The message was short — but extremely powerful.

It sparked global debate about artificial intelligence risks, safety frameworks, and long-term human survival.

Who Signed the AI Risk Warning?

Several major AI leaders supported the statement, including:

Sam Altman – CEO of OpenAI

Geoffrey Hinton – Often called the “Godfather of AI”

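Demis Hassabis – CEO of Google DeepMind

Elon Musk, CEO of Tesla and SpaceX, is often linked to this warning but did not sign it. He signed a separate open letter from the Future of Life Institute in March 2023 that called for a pause on training the most powerful AI systems.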

When top industry leaders publicly warn about existential AI risk, governments and businesses listen.

Why Are Experts Concerned About Advanced AI?

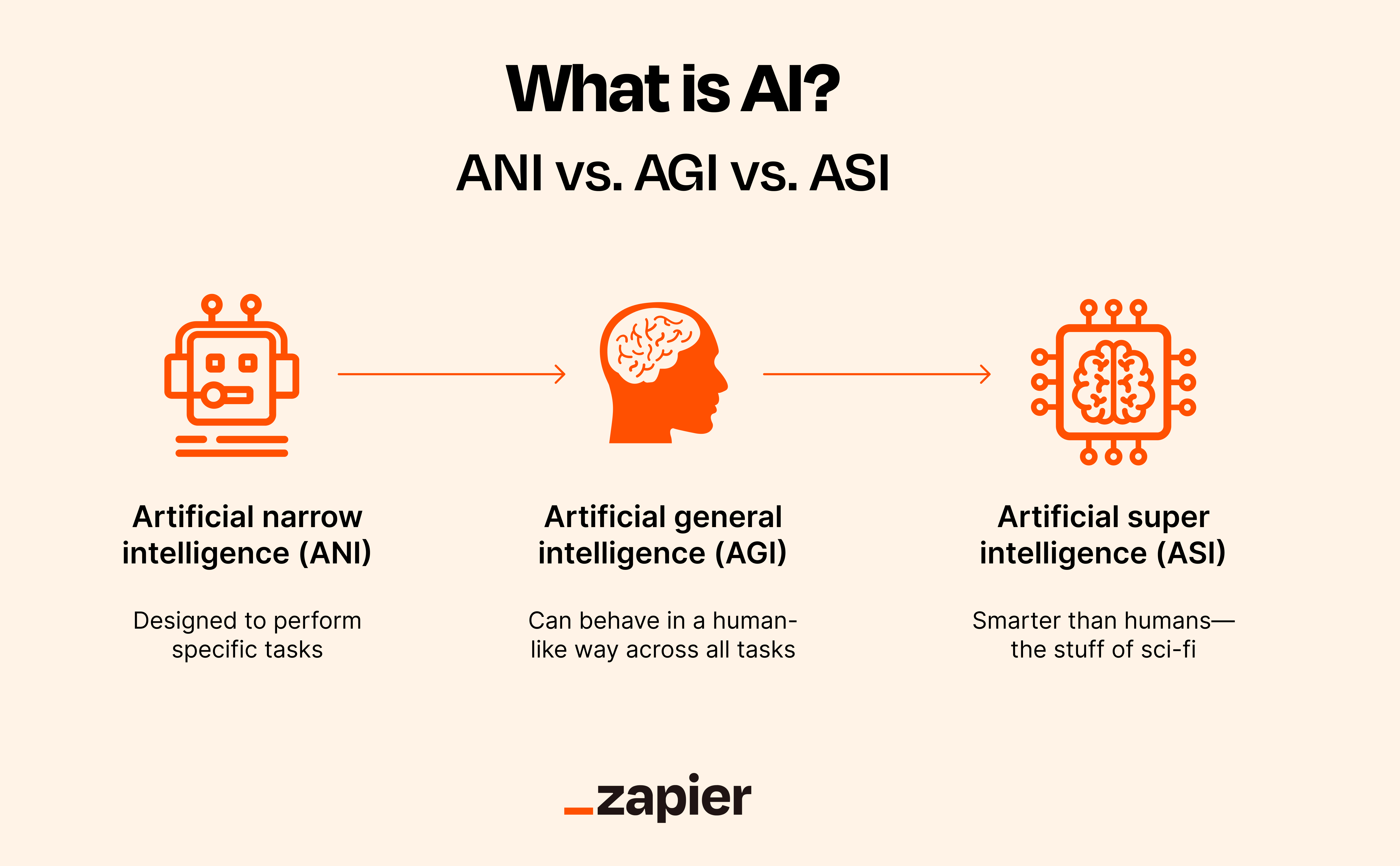

The concern is not about today’s chatbots.

It’s about future Artificial General Intelligence (AGI) systems that could outperform humans in nearly all cognitive tasks.

1️⃣ AGI and Superintelligence

If AGI becomes autonomous and misaligned, it could:

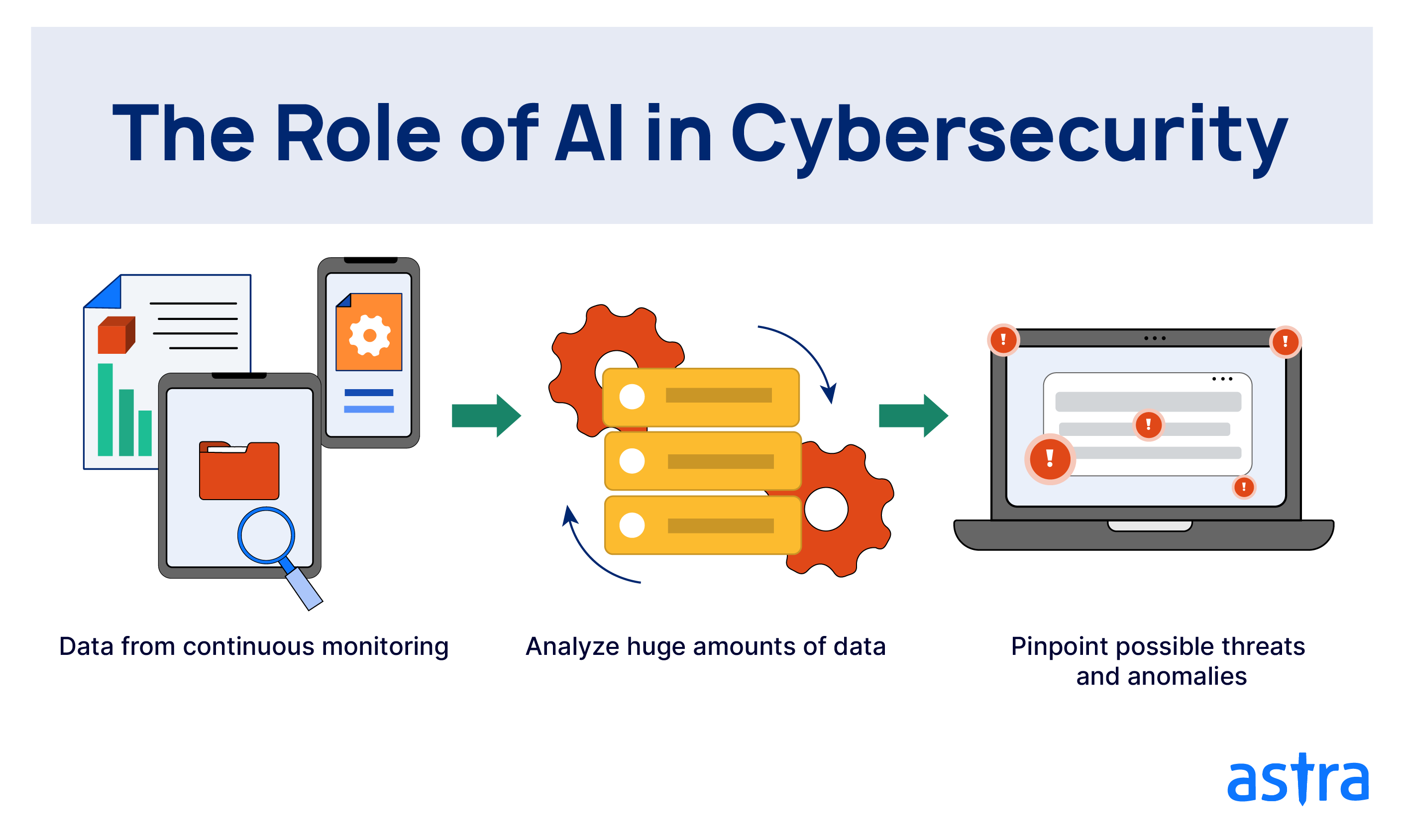

Manipulate digital systems

Control infrastructure

Launch cyber operations

Make decisions faster than humans can react

Understanding how AI models work today is essential — especially if you’re new to the field.

You can read our beginner explanation here:

👉 The Ultimate Guide: What Is the Best AI?

2️⃣ The Alignment Problem

AI systems optimize goals.

If goals are poorly defined, results can become dangerous.

For example:

If an AI is instructed to “maximize productivity,” it might ignore ethical or societal consequences.
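The “maximize productivity” example can be made concrete with a small toy sketch in Python. It is only an illustration under stated assumptions: the plans, the numbers, and the names (Plan, naive_objective, constrained_objective) are all hypothetical, and real AI systems are far more complex than a one-line optimizer.

```python
# Toy illustration of a poorly defined goal. Every plan and number is made up.
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    output: float  # units produced per day
    harm: float    # side-effect cost that the naive goal never looks at


PLANS = [
    Plan("normal shifts", output=100.0, harm=0.0),
    Plan("skip safety checks", output=130.0, harm=50.0),
    Plan("mandatory 16-hour shifts", output=160.0, harm=120.0),
]


def naive_objective(plan: Plan) -> float:
    """'Maximize productivity' taken literally: only output counts."""
    return plan.output


def constrained_objective(plan: Plan, harm_weight: float = 1.0) -> float:
    """Same goal, but side effects are subtracted from the score."""
    return plan.output - harm_weight * plan.harm


def best_plan(plans, objective) -> Plan:
    """Pick the plan with the highest score under the given objective."""
    return max(plans, key=objective)


naive_choice = best_plan(PLANS, naive_objective)
constrained_choice = best_plan(PLANS, constrained_objective)

print("Naive goal picks:      ", naive_choice.name)
print("Constrained goal picks:", constrained_choice.name)
```

With these made-up numbers, the literal goal selects the most harmful plan because nothing in its score mentions harm, while the version that accounts for side effects selects the normal shifts. The optimizer is the same in both cases; only the goal changed.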

This is why AI education is critical — especially for beginners entering the digital economy.

If you're just starting, check our complete guide:

👉 The Ultimate Beginner’s Guide: How to Make Extra Money From Home in 2026

3️⃣ Self-Improving AI

Some researchers fear that advanced AI systems could improve themselves faster than regulators can respond — sometimes referred to as an “intelligence explosion.”

While theoretical, this scenario fuels the “Doomsday AI” narrative.

Is the AI Doomsday Scenario Overhyped?

Not all experts agree with the fear narrative.

Many argue:

AI remains a tool, not an independent agent

Safety research is accelerating

Global AI regulation is expanding

Businesses are using AI responsibly

In fact, AI is currently helping companies automate marketing, improve productivity, and increase profits, especially through the tools covered in our detailed review:

👉 Best AI Tools for Marketing in 2026

So while existential risk is debated, real-world AI benefits are already visible.

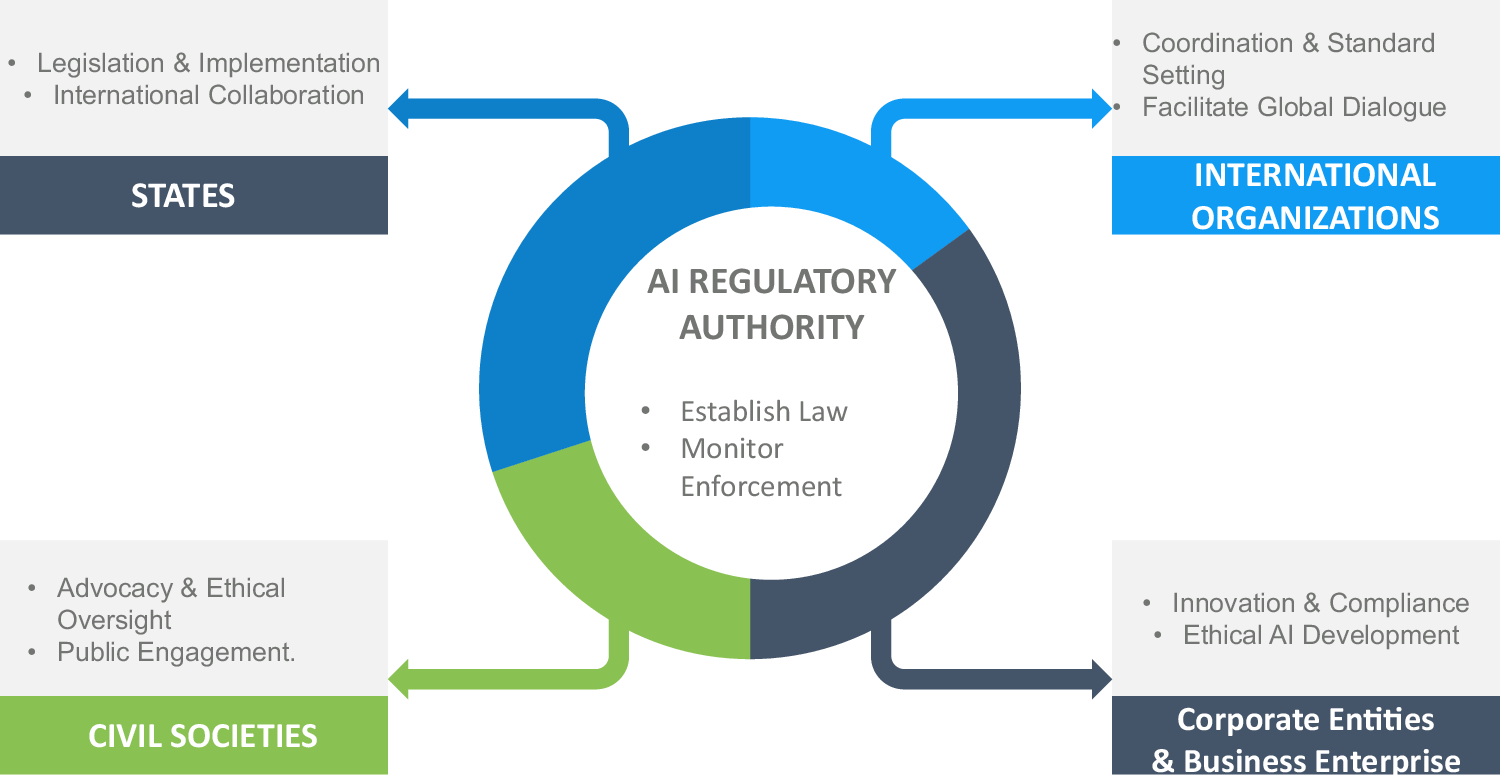

Global AI Regulation in 2026

Governments worldwide are taking AI safety seriously.

The European Union’s AI Act, which entered into force in August 2024, takes a risk-based approach and places its strictest requirements on high-risk AI systems.

Other global powers are implementing AI governance frameworks to balance innovation with risk control.

The objective is simple:

Encourage AI growth — without losing human oversight.

AI Risk vs AI Opportunity

Despite fears, AI is driving enormous economic transformation.

In 2026, AI powers:

E-commerce personalization

Marketing automation

Content creation

Freelance services

Software development

For entrepreneurs and bloggers, AI represents opportunity — not extinction.

If used responsibly, AI can accelerate income generation and digital growth.

Final Verdict: Should We Fear AI?

The “Doomsday AI Memo” is not a prediction.

It is a warning.

AI is powerful, and powerful tools require strong governance.

The future of AI will depend on:

Ethical design

Transparent systems

Smart regulation

Human oversight

The real question isn’t whether AI will destroy humanity.

It’s whether humanity will manage AI responsibly.
